tl;dr: I prefer an ORCID + figshare + Twitter/Google+ combination over services like ResearchGate and Academia.edu.

By 2009 I was taking social media pretty seriously, and with all those links to find, save, and share, I put a lot of thought into social bookmarking. I was trying to figure out how to manage a bunch of overlapping options; at one time or another, I was using Delicious, Diigo, Pinboard, Google Reader, Facebook, Twitter, Google+ and FriendFeed. Some of those products had integrations (like sending links from Reader to Twitter) while also having overlapping functions (like following someone on Reader for the same links you were seeing them post to Twitter). New services popped up all the time, and others faded away or were killed off completely. It was kind of a mess, and it taught me some things about what I valued in my tools: (1) multiple small tools (but not too many), each focused on being good at a specific task, could return greater total value than all-in-one solutions, and (2) openness matters for financial reasons (both mine and the service's), for interoperability, and for exportability.

The current landscape of tools and services for managing an online scholarly identity feels very reminiscent of those social bookmarking days. Part of that online identity includes general social media services, like Twitter and Facebook, but also a bunch of services for academics: Academia.edu, ResearchGate, ORCID, Google Scholar, figshare, SlideShare, ResearcherID, and Mendeley. I'm sure there are others.

My experience with social networks and bookmarking taught me I had three basic needs: establishing my identity, saving resources, and a place to follow and share with others. For my identity, I've used my own website as well as services like about.me. For saving resources (links, mostly), I eventually ditched both Delicious and Diigo and went with Pinboard. For following and sharing, I now stick mostly to Twitter and Google+, with less activity on Facebook and LinkedIn.

With a scholarly identity, I feel like I still have the same three basic needs: establishing my identity, saving resources, and a place to follow and share with others. In academia, your identity is often represented by your curriculum vitae and publication record, and there are ways of maintaining a CV online that go beyond just posting a PDF of the paper version. For saving resources, academics need a repository to save their slides, posters, handouts, pre-prints, and unpublished manuscripts. That leaves a place to follow and share with others. It could be an all-purpose social network, or it could be something more specialized. Here's how I see the services I mentioned playing out across these three needs:

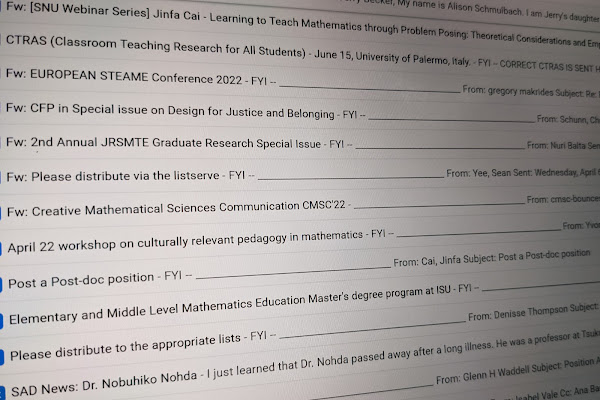

|

| My view of the online scholarly identity landscape |

Right away, you see two services, Academia.edu and ResearchGate, making the all-in-one play. They also happen to be mostly closed, profit-seeking services, which has raised the eyebrows (and/or fists) of some academics. Mendeley is nearby but doesn't offer much as a social network. Google Scholar is a bit further from the center: it lets you follow other academics and be notified about new publications, but offers no way to interact with other people. For the most part, these services, while certainly valuable in their own ways, go against my two criteria of simplicity and openness. Does that mean I don't use them? Actually, I have accounts and profiles on all four of these services, but I don't spend a lot of time on them and I'm very wary of the rights they want over my work. It's tricky to add a publication to your ResearchGate or Academia.edu profile without actually giving them the document, and if you manage to do it they'll hound you for the full-text version. Academia.edu is so tricky in this respect that I've decided to trust it with nothing. For more on the pros and cons of these services, I'll refer you to the post "A Social Networking Site is Not an Open Access Repository" from the University of California.

That leaves me seeking out tools in the non-overlapping parts of my diagram. Here's what I've come to use most:

Identity: This is probably the easiest choice of them all. My ORCID is like my CV, and its sole purpose is to give researchers a unique identity tied to their scholarly activities and outputs. (See this as a list of ten things, if you prefer.) My profile is filled with a lot of things I entered manually, but when I recently published an article with Springer I gave them my ORCID and got two things: (1) the article was added to my ORCID profile automatically via CrossRef, and (2) I got a little ORCID badge on the article that links to my ORCID profile. Over time, this system is designed to make sure that no person or system confuses me with another Raymond Johnson, and that all my works are tied together. I do pay attention to my Google Scholar profile, since it's such a widely used and useful search tool, but you can tell Google doesn't put a ton of resources behind it, and I'm not sure how many people or services rely on the profile features.

Impactstory is a really cool thing that belongs in this category (nearest the center), but it really operates on top of, not instead of, ORCID and other services.

Repository: This is a tougher choice because, in addition to needing a repository that is stable and secure, there are also intellectual property rights to think about. I have tried hosting files on my own website, as many publishers allow academics to do. That puts a certain amount of responsibility on me, and I have to worry about registering domains, fixing broken links, keeping a stable URL structure, etc. I'm moving away from that and recently added all my slides and posters to figshare. While figshare does give me a profile page, identity services are really not its thing. Figshare is about sharing all kinds of openly licensed scholarly outputs, including datasets, figures, posters, presentations, and documents. They give you a stable DOI that (I assume) will always point to your file, even if figshare shuts down and someone else takes over the repository. figshare does have its rough edges, and from following their social media activity I can safely say that most of their attention is on behind-the-scenes integrations with services like ORCID and Impactstory, and on APIs that are more for librarians than individual users. figshare also takes some commitment, as you have to choose one of a number of open licenses to post your work publicly (like Creative Commons BY; I wish other CC licenses were available, but they're not), and once you post something there's no delete button. It's not that you've given your property to figshare and they won't give it back; rather, you've licensed your property for the world to see and use, and figshare is making sure the world can exercise that right. There are alternatives, like SlideShare, but it's owned by LinkedIn, more business-oriented, and not as open or as integrated into academic services.

I currently have two documents that I'm not sharing on figshare and have instead put in our university repository. I could put more on scholar.colorado.edu and rely on the fact that it will probably operate as long as the university exists, but I can't continue to use it after I leave the university.

Social Network: While I get notifications about publications through Google Scholar and ResearchGate, I hardly interact with other people there at all. For that, I stick with Twitter and Google+. And that's fine with me, really, as I only have so much mental bandwidth to work with anyway. Once in a while I'll look at the Q&A on ResearchGate, but rarely do I see a conversation I really want to jump into, the way I routinely do on my regular social media accounts.

By going with an ORCID + figshare + Twitter/Google+ combination, I feel like I'm getting (and retaining) more value than I would with a single service like ResearchGate. It's a fair amount of work, though, and I'd recommend people not try to maintain too many identities at once. My Academia.edu profile is nearly empty because fewer of the education researchers I know are there, and it's just too much duplicate work to maintain both it and ResearchGate. Even my ResearchGate profile still doesn't include everything I list at ORCID. I think the rarer your name is, the less need there is for you to maintain a Google Scholar profile, and you can probably settle on just one repository for public posting of your work. I have a lot of confidence that ORCID will be around for the long haul, unlike some of these venture-funded services that will have to make a profit or likely be shut down. It's not easy to tell which might go the way of Google Reader or FriendFeed, but as we saw with those services, something new came along to replace them, and the switch was easier if we weren't too invested in any one tool.

Oh, and if all else fails, an up-to-date, ready-to-print PDF of your CV is still not a bad thing to have handy.